Executive Summary

- Who this is for: CIOs, CTOs, Enterprise Architects, AI Transformation Leaders

- Problem it solves: Confusion around LLM, RAG, Agents, and MCP leading to unstable AI initiatives

- Key outcomes: Clear architectural layering, reduced AI sprawl, improved governance control

- Time to implement: 60-90 days for structured AI capability alignment

- Business impact: Lower experimentation waste, controlled cost growth, safer AI scaling

The Enterprise AI Confusion Problem

Most organizations say:

"We need an LLM."

"Let's build an AI Agent."

"Add RAG."

"Use MCP."

Pilots begin.

Budgets are allocated.

Six months later:

- AI answers are inconsistent

- Costs fluctuate

- Security reviews slow progress

- Leadership questions ROI

The problem is not intelligence.

The problem is architectural clarity.

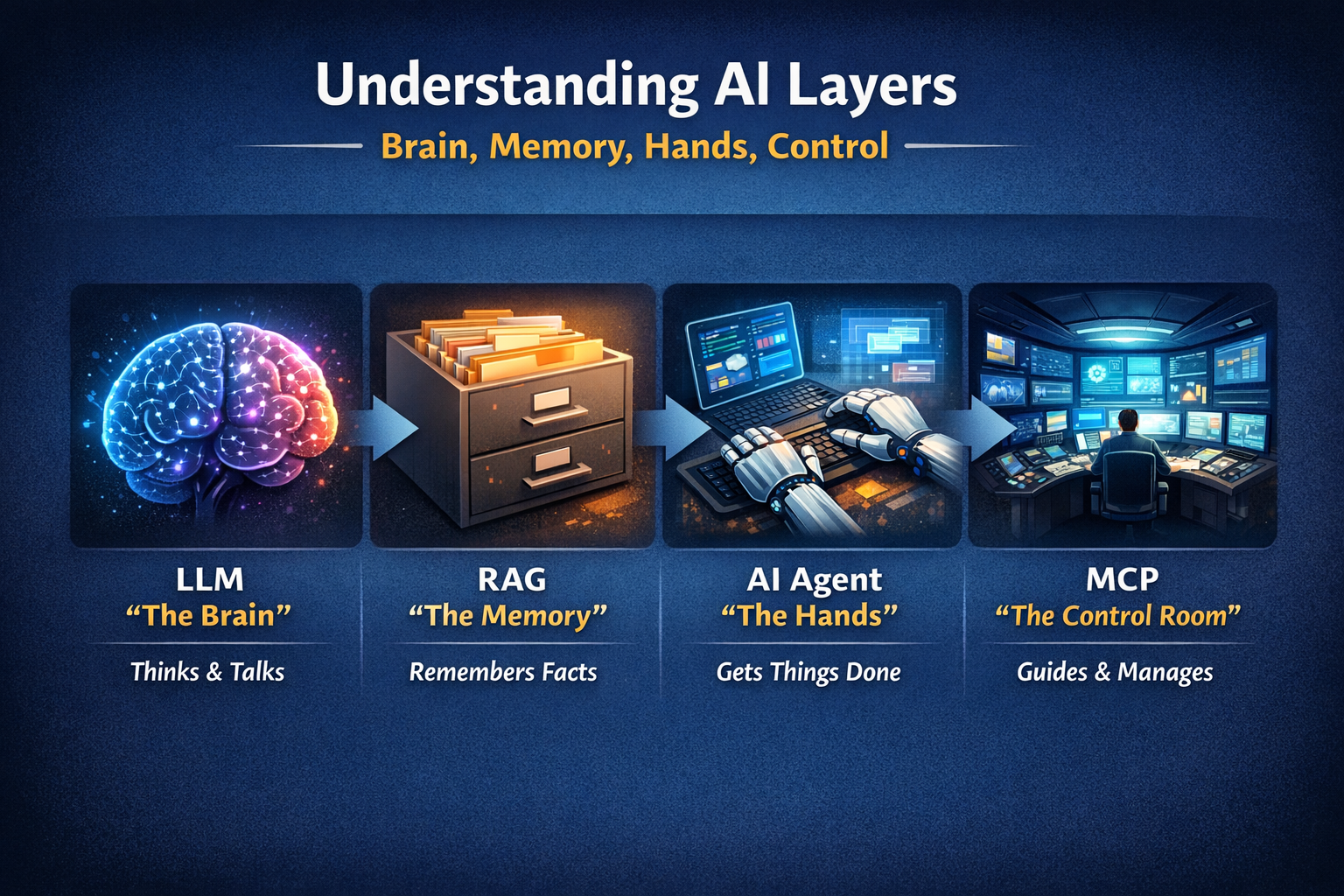

The Brain Model of Enterprise AI

Every modern AI system can be understood using one simple metaphor:

Brain. Memory. Hands. Control.

If you understand these four, you understand modern AI architecture.

1. LLM = The Brain

An LLM (large language model) is a brain.

It can:

- Think

- Write

- Explain

- Summarize

- Reason

It cannot:

- Access your private company data (by default)

- Press buttons

- Update systems

- Send emails

It only thinks.

Strategic insight:

On its own, an LLM introduces minimal operational risk, but it also delivers limited enterprise value without context.

2. RAG = The Memory

Now give the brain memory.

RAG (retrieval-augmented generation) allows the model to:

- Look up internal documents

- Reference policies

- Retrieve knowledge base articles

- Use enterprise data before answering

Without memory → The brain guesses.

With memory → The brain checks first.

RAG does not make the brain smarter.

It makes it grounded.

Strategic insight:

RAG reduces hallucination risk but introduces data governance responsibility.
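To make "checks first" concrete, here is a minimal sketch of the retrieve-then-answer pattern in Python. It assumes a toy in-memory document set and a simple word-overlap score in place of a real embedding search; `DOCUMENTS`, `retrieve`, and `build_grounded_prompt` are hypothetical names, and the step that sends the prompt to an LLM is deliberately left out.

```python
import re

# Hypothetical internal knowledge base (illustrative only).
DOCUMENTS = {
    "travel-policy": "Employees must book flights through the approved travel portal.",
    "expense-policy": "Expenses above 500 USD require manager approval before reimbursement.",
    "security-policy": "Customer data must never be pasted into external tools.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, k: int = 2) -> list[tuple[str, str]]:
    """Return up to k documents sharing the most words with the question.

    A production RAG pipeline would typically use embeddings and a vector
    index; word overlap stands in for that here. Documents with no overlap
    are dropped.
    """
    q = tokenize(question)
    scored = [
        (len(q & tokenize(text)), doc_id, text)
        for doc_id, text in DOCUMENTS.items()
    ]
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(doc_id, text) for score, doc_id, text in scored[:k] if score > 0]


def build_grounded_prompt(question: str) -> str:
    """Assemble a prompt that tells the model to answer only from retrieved text."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(question))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    print(build_grounded_prompt("Do expenses need manager approval?"))
```

This is also where RAG's data governance responsibility becomes concrete: whatever is placed in `DOCUMENTS` becomes visible to the model, so access rules for that store are access rules for the AI.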

3. AI Agent = The Hands

Now give the brain hands.

An agent can:

- Call APIs

- Send emails

- Update CRM records

- Trigger workflows

- Approve requests

Now the brain does not just think.

It acts.

This is where risk increases.

Advice is safe.

Action has consequences.

Strategic insight:

Agent deployment requires operational maturity and governance discipline.
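The step from "thinks" to "acts" can be sketched as a dispatcher: the model proposes a tool call as structured data, and ordinary code executes it. This is a minimal Python sketch under stated assumptions: the JSON shape of `model_output`, the tool names, and the functions `send_email` and `update_crm_record` are hypothetical stand-ins, and no real model or external API is called.

```python
import json
from typing import Callable


# Hypothetical tools: in production these would call real email and CRM APIs.
def send_email(to: str, subject: str) -> str:
    return f"email queued to {to}: {subject}"


def update_crm_record(record_id: str, status: str) -> str:
    return f"record {record_id} set to {status}"


# Registry mapping tool names the model may request to the code that runs them.
TOOLS: dict[str, Callable[..., str]] = {
    "send_email": send_email,
    "update_crm_record": update_crm_record,
}


def run_tool_call(model_output: str) -> str:
    """Parse a model-proposed tool call (assumed JSON shape) and execute it."""
    call = json.loads(model_output)
    name = call["tool"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    # This is where advice becomes action: a real side effect would happen here.
    return TOOLS[name](**call["arguments"])


if __name__ == "__main__":
    proposed = '{"tool": "update_crm_record", "arguments": {"record_id": "42", "status": "closed"}}'
    print(run_tool_call(proposed))
```

Nothing in `run_tool_call` restricts which agent may trigger which tool, and nothing asks for human approval; that gap is the operational risk this section describes.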

4. MCP = The Control System

If the brain has hands, it needs control.

MCP (Model Context Protocol) standardizes how the brain talks to tools.

It defines:

- How tools are described

- How context is passed

- How capabilities are exposed

- How communication is structured

MCP is not intelligence.

It is not business rules.

It is structured communication that enables governance.

Strategic insight:

Without a control layer, agents become fragmented and difficult to audit.
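To show what "structured communication" looks like, the sketch below builds the kind of JSON-RPC 2.0 messages MCP uses for tools: each tool is described by a `name`, a `description`, and a JSON Schema `inputSchema`; clients discover tools with `tools/list` and invoke them with `tools/call`, and results come back as a `content` list with an `isError` flag. This is a hand-rolled Python illustration, not the official MCP SDK and not a complete server; the `update_crm_record` tool and the `handle` and `error` functions are hypothetical, and transport, initialization, and schema validation are omitted.

```python
import json

# One tool described the way MCP describes tools: a name, a human-readable
# description, and a JSON Schema for its input (the tool itself is hypothetical).
TOOL_DEFINITIONS = [
    {
        "name": "update_crm_record",
        "description": "Set the status of a CRM record.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "record_id": {"type": "string"},
                "status": {"type": "string", "enum": ["open", "closed"]},
            },
            "required": ["record_id", "status"],
        },
    }
]


def error(request_id: int, code: int, message: str) -> dict:
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer tools/list and tools/call requests (simplified, no schema validation)."""
    method, request_id = request["method"], request["id"]
    if method == "tools/list":
        result = {"tools": TOOL_DEFINITIONS}
    elif method == "tools/call":
        params = request["params"]
        if params["name"] != "update_crm_record":
            # -32602 is the JSON-RPC 2.0 "Invalid params" code.
            return error(request_id, -32602, f"Unknown tool: {params['name']}")
        args = params["arguments"]
        text = f"record {args['record_id']} set to {args['status']}"
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        # -32601 is the JSON-RPC 2.0 "Method not found" code.
        return error(request_id, -32601, f"Method not found: {method}")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


if __name__ == "__main__":
    listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list", "params": {}})
    print(json.dumps(listing, indent=2))
    call = handle({
        "jsonrpc": "2.0", "id": 2, "method": "tools/call",
        "params": {"name": "update_crm_record",
                   "arguments": {"record_id": "42", "status": "closed"}},
    })
    print(call["result"]["content"][0]["text"])
```

The governance value is in the shape: every capability is declared in one machine-readable list, and every invocation arrives through a single method, which gives logging and policy checks one place to attach.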

The Enterprise AI Layering Framework

Modern AI systems should be classified into four layers:

- Reasoning (Brain)

- Knowledge (Memory)

- Action (Hands)

- Control (MCP)

Confusing these layers leads to instability.

Scaling without control increases risk.

Implementation Guide (90 Days)

Phase 1: Inventory (Weeks 1-3)

Objective: Map your AI footprint

Activities:

- List all LLM usage

- Identify RAG pipelines

- Audit agent tool access

- Classify autonomy levels

Success Metric: Complete AI capability map

Phase 2: Boundary Definition (Weeks 4-6)

Objective: Separate thinking from doing

Activities:

- Restrict agent permissions

- Standardize retrieval pipelines

- Introduce tool exposure standards

- Define approval checkpoints

Success Metric: No autonomous agent without a defined boundary
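One way to express "restrict agent permissions" and "define approval checkpoints" in code is a policy check that runs before any tool executes. This Python sketch assumes a hypothetical per-agent allowlist (`AGENT_PERMISSIONS`), a hypothetical set of tools that need human sign-off (`REQUIRES_APPROVAL`), and a boolean `approved` flag standing in for a real approval workflow.

```python
from dataclasses import dataclass

# Hypothetical boundary definitions: which agent may call which tools.
AGENT_PERMISSIONS = {
    "support-agent": {"search_kb", "draft_reply"},
    "finance-agent": {"search_kb", "approve_refund"},
}

# Hypothetical high-impact tools that require a human approval checkpoint.
REQUIRES_APPROVAL = {"approve_refund"}


@dataclass
class Decision:
    allowed: bool
    reason: str


def check_boundary(agent: str, tool: str, approved: bool = False) -> Decision:
    """Decide whether an agent may run a tool under the defined boundary."""
    # Unknown agents get an empty allowlist, so the default is deny.
    if tool not in AGENT_PERMISSIONS.get(agent, set()):
        return Decision(False, f"{agent} is not permitted to call {tool}")
    if tool in REQUIRES_APPROVAL and not approved:
        return Decision(False, f"{tool} requires human approval")
    return Decision(True, "within boundary")


if __name__ == "__main__":
    print(check_boundary("support-agent", "approve_refund"))
    print(check_boundary("finance-agent", "approve_refund"))
    print(check_boundary("finance-agent", "approve_refund", approved=True))
```

Defaulting to deny means an agent that was never given a boundary cannot act at all, which is one way to enforce the Phase 2 success metric.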

Phase 3: Governance Integration (Weeks 7-12)

Objective: Institutionalize AI architecture discipline

Activities:

- Introduce AI architecture review board

- Log tool usage

- Track cost per workflow

- Align AI with enterprise architecture governance

Success Metric: AI governed like any core platform
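"Log tool usage" and "track cost per workflow" can share one record. The Python sketch below assumes each tool invocation is logged with a workflow name and a cost figure; the `ToolEvent` schema, the function names, and the cost values are hypothetical, and a real deployment would write to a durable audit store rather than an in-memory list.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToolEvent:
    """One audited tool invocation (hypothetical schema)."""
    workflow: str
    agent: str
    tool: str
    cost_usd: float
    timestamp: datetime


AUDIT_LOG: list[ToolEvent] = []


def log_tool_use(workflow: str, agent: str, tool: str, cost_usd: float) -> None:
    """Append an event to the in-memory audit log with a UTC timestamp."""
    AUDIT_LOG.append(
        ToolEvent(workflow, agent, tool, cost_usd, datetime.now(timezone.utc))
    )


def cost_per_workflow(events: list[ToolEvent]) -> dict[str, float]:
    """Sum logged cost by workflow name."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        totals[event.workflow] += event.cost_usd
    return dict(totals)


if __name__ == "__main__":
    log_tool_use("refund-handling", "finance-agent", "search_kb", 0.02)
    log_tool_use("refund-handling", "finance-agent", "approve_refund", 0.05)
    log_tool_use("ticket-triage", "support-agent", "draft_reply", 0.03)
    print(cost_per_workflow(AUDIT_LOG))
```

Keeping the audit trail and the cost data in the same event means a review board can answer "who did what" and "what did it cost" from one source.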

Resource Estimate:

- Enterprise Architect

- AI Engineer

- Security & Compliance representation

Evidence from Practice

The Challenge

In a prior enterprise environment, multiple teams launched AI initiatives independently.

Some used only LLMs.

Some added retrieval.

Some deployed autonomous agents.

There was no unified architectural classification.

Cost grew unpredictably.

Security teams escalated concerns.

Executives hesitated to scale.

The Approach

We simplified everything into four categories:

Brain.

Memory.

Hands.

Control.

Agent autonomy was reduced until governance was structured.

Tool exposure was standardized.

Architectural review was formalized.

The Results

Within months:

- AI cost variance reduced

- Compliance approvals accelerated

- Operational stability improved

- Executive confidence increased

Clarity restored control.

Action Plan

This Week

List every AI initiative.

Label each as:

- Brain only

- Brain + Memory

- Brain + Memory + Hands

If control is unclear, you have architectural risk.
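The labeling exercise can be run as a short script over an initiative inventory. This Python sketch assumes each initiative is recorded with hypothetical yes/no fields for retrieval, action capability, and a defined control layer. It applies the risk rule to initiatives that can act, following the principle that if the brain has hands, it needs control; `Initiative`, `label`, and `has_architectural_risk` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """One AI initiative from the inventory (hypothetical fields)."""
    name: str
    uses_retrieval: bool     # Memory: grounded in enterprise data?
    can_act: bool            # Hands: can it call APIs or change systems?
    has_control_layer: bool  # Control: is tool access standardized and auditable?


def label(initiative: Initiative) -> str:
    """Build a layer label such as 'Brain + Memory + Hands'."""
    parts = ["Brain"]
    if initiative.uses_retrieval:
        parts.append("Memory")
    if initiative.can_act:
        parts.append("Hands")
    return "Brain only" if len(parts) == 1 else " + ".join(parts)


def has_architectural_risk(initiative: Initiative) -> bool:
    """Flag initiatives that can act but lack a clear control layer."""
    return initiative.can_act and not initiative.has_control_layer


if __name__ == "__main__":
    inventory = [
        Initiative("Policy Q&A bot", uses_retrieval=True, can_act=False, has_control_layer=False),
        Initiative("Refund agent", uses_retrieval=True, can_act=True, has_control_layer=False),
        Initiative("Drafting assistant", uses_retrieval=False, can_act=False, has_control_layer=False),
    ]
    for item in inventory:
        flag = "RISK" if has_architectural_risk(item) else "ok"
        print(f"{item.name}: {label(item)} [{flag}]")
```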

Next 30 Days

Run a structured AI layering workshop.

Define governance boundaries for agent capabilities.

3-6 Months

Integrate AI layering into enterprise architecture governance.

Conduct quarterly AI structural audits.

Align AI roadmap with operational maturity.

Final Thought

LLM thinks.

RAG remembers.

Agent acts.

MCP structures.

Enterprise AI instability does not come from intelligence limits.

It comes from architectural ambiguity.

Scale intelligence only after you structure it.

Next Step

If your organization is scaling AI without clear architectural layering

→ Book a 30-minute strategy consultation

AI transformation succeeds when intelligence is governed by structure.